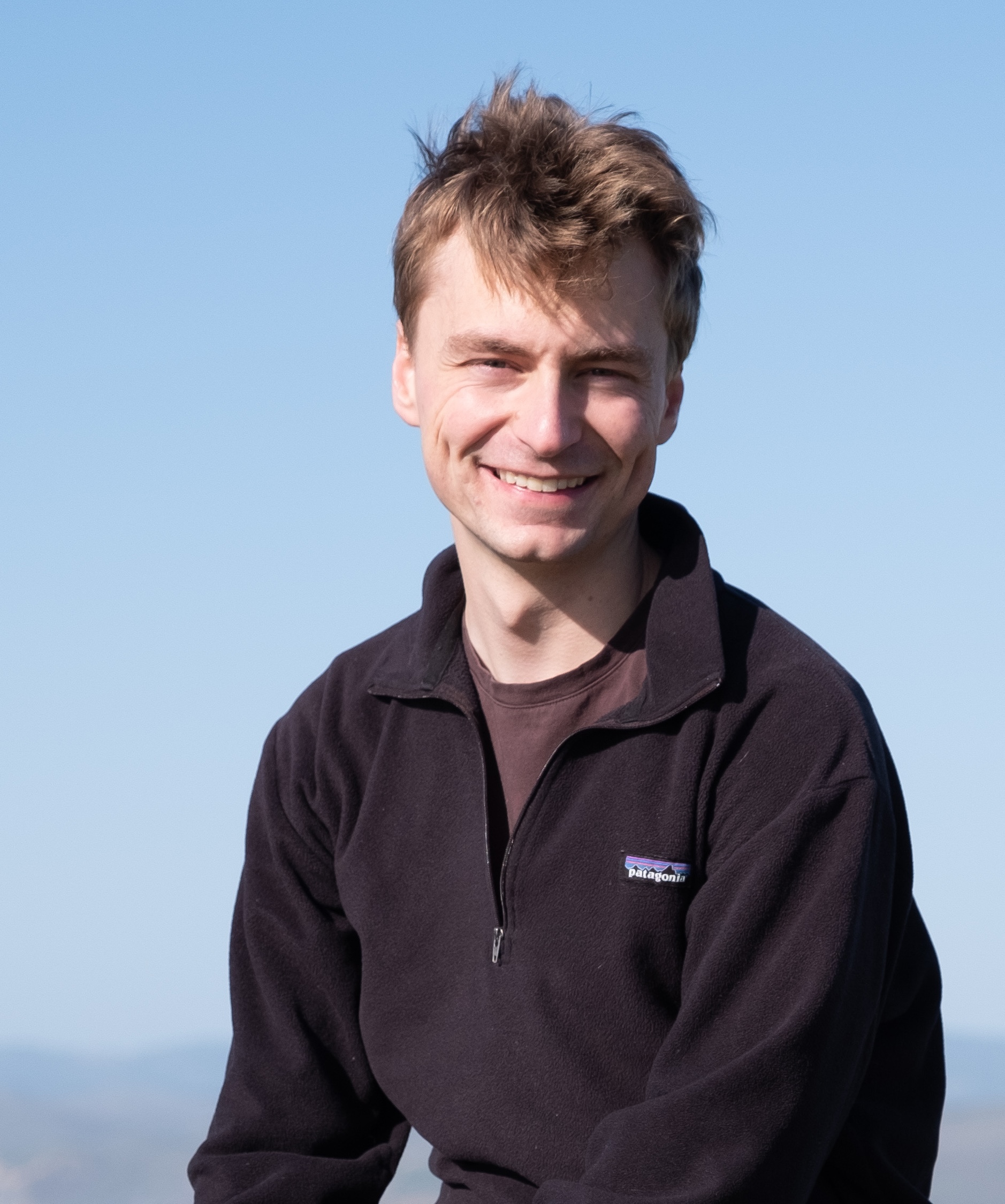

Hello!

I'm a Research Scientist at the Astera Institute / Simplex.

I recently completed my PhD in the Department of Physics at MIT. My PhD work aimed at understanding deep neural networks: the internal mechanisms that networks learn, and how and why they learn them. I've worked on neural scaling, on grokking (here, here), and on the structure of neural network representations (here, here). My PhD thesis, Decomposing Deep Neural Network Minds into Parts, is available here.

Before my PhD, I studied math at UC Berkeley. During my undergrad, I worked with radio astronomers on SETI, Erik Hoel on deep learning theory, and Adam Gleave at CHAI on AI safety.

If you'd like to chat about research or life, feel free to schedule something here.

My email is eric.michaud99@gmail.com. I am on Twitter @ericjmichaud_. Here are my GitHub and my CV, and here is my Google Scholar page.

Selected Works

- On neural scaling and the quanta hypothesis

- The Quantization Model of Neural Scaling (NeurIPS 2023)

- The space of LLM learning curves

- A Physics of Systems that Learn

- On the creation of narrow AI: hierarchy and nonlocality of neural network skills (NeurIPS 2025)

Podcasts

- Interpretability and AI Scaling with Eric Michaud (August 2025)

- AI in Academia with Eric Michaud (March 2025)

- Eric Michaud—Scaling, Grokking, Quantum Interpretability (July 2023)

Selected Talks

- The Quantization Model of Neural Scaling, 5th Workshop on Neural Scaling Laws: Emergence and Phase Transitions, July 2023.

- The Quantization Model of Neural Scaling, MIT Department of Physics "The Impact of chatGPT and other large language models on physics research and education" workshop, July 2023.

- Omnigrok: Grokking Beyond Algorithmic Data, ICLR in Kigali, Rwanda, May 2023.

Papers

- There Will Be a Scientific Theory of Deep Learning. Jamie Simon, Daniel Kunin, Alexander Atanasov, Enric Boix-Adserà, Blake Bordelon, Jeremy Cohen, Nikhil Ghosh, Florentin Guth, Arthur Jacot, Mason Kamb, Dhruva Karkada, Eric J. Michaud, Berkan Ottlik, Joseph Turnbull. 2026.

- Understanding sparse autoencoder scaling in the presence of feature manifolds. Eric J. Michaud, Liv Gorton, Tom McGrath. Mechanistic Interpretability Workshop at NeurIPS, 2025.

- On the creation of narrow AI: hierarchy and nonlocality of neural network skills. Eric J. Michaud, Asher Parker-Sartori, Max Tegmark. NeurIPS, 2025.

- Open Problems in Mechanistic Interpretability. Lee Sharkey, Bilal Chughtai, Joshua Batson, Jack Lindsey, Jeff Wu, ..., Arthur Conmy, Neel Nanda, Jessica Rumbelow, Martin Wattenberg, Nandi Schoots, Joseph Miller, Eric J. Michaud, Stephen Casper, Max Tegmark, William Saunders, David Bau, Eric Todd, ..., Jesse Hoogland, Daniel Murfet, Tom McGrath. TMLR, 2025.

- Physics of Skill Learning. Ziming Liu, Yixuan Liu, Eric J. Michaud, Josh Gore, Max Tegmark. 2025.

- Efficient Dictionary Learning with Switch Sparse Autoencoders. Anish Mudide, Joshua Engels, Eric J. Michaud, Max Tegmark, Christian Schroeder de Witt. ICLR, 2025.

- The Geometry of Concepts: Sparse Autoencoder Feature Structure. Yuxiao Li*, Eric J. Michaud*, David D. Baek*, Joshua Engels, Xiaoqing Sun, Max Tegmark. Entropy 27(4), 344, 2025.

- Not all language model features are one-dimensionally linear. Joshua Engels, Eric J. Michaud, Isaac Liao, Wes Gurnee, Max Tegmark. ICLR, 2025.

- Survival of the Fittest Representation: A Case Study with Modular Addition. Xiaoman Delores Ding, Zifan Carl Guo, Eric J. Michaud, Ziming Liu, Max Tegmark. 2024.

- Sparse Feature Circuits: Discovering and Editing Interpretable Causal Graphs in Language Models. Sam Marks, Can Rager, Eric J. Michaud, Yonatan Belinkov, David Bau, Aaron Mueller. ICLR (Oral), 2025.

- Opening the AI Black Box: Distilling Machine-Learned Algorithms into Code. Eric J. Michaud*, Isaac Liao*, Vedang Lad*, Ziming Liu*, et al. Entropy 26(12), 1046, 2024.

- Open Problems and Fundamental Limitations of Reinforcement Learning from Human Feedback. Stephen Casper, Xander Davies, Claudia Shi, Thomas Krendl Gilbert, ..., Max Nadeau, Eric J. Michaud, Jacob Pfau, ..., Anca Dragan, David Krueger, Dorsa Sadigh, Dylan Hadfield-Menell. TMLR, 2023.

- The Quantization Model of Neural Scaling. Eric J. Michaud, Ziming Liu, Uzay Girit, Max Tegmark. NeurIPS, 2023.

- Precision Machine Learning. Eric J. Michaud, Ziming Liu, Max Tegmark. Entropy 25(1), 175, 2023.

- Omnigrok: Grokking Beyond Algorithmic Data. Ziming Liu, Eric J. Michaud, Max Tegmark. ICLR (Spotlight), 2023.

- Towards Understanding Grokking: An Effective Theory of Representation Learning. Ziming Liu, Ouail Kitouni, Niklas Nolte, Eric J. Michaud, Max Tegmark, Mike Williams. NeurIPS (Oral), 2022.

- Examining the Causal Structures of Deep Neural Networks Using Information Theory. Scythia Marrow*, Eric J. Michaud*, Erik Hoel. Entropy 22(12), 1429, 2020.

- Understanding Learned Reward Functions. Eric J. Michaud, Adam Gleave, Stuart Russell. Deep RL Workshop, NeurIPS, 2020.

- Lunar Opportunities for SETI. Eric J. Michaud, Andrew Siemion, Jamie Drew, Pete Worden. 2020.